I wonder how this will affect the matrix.

AI has lost any respect it had for us.

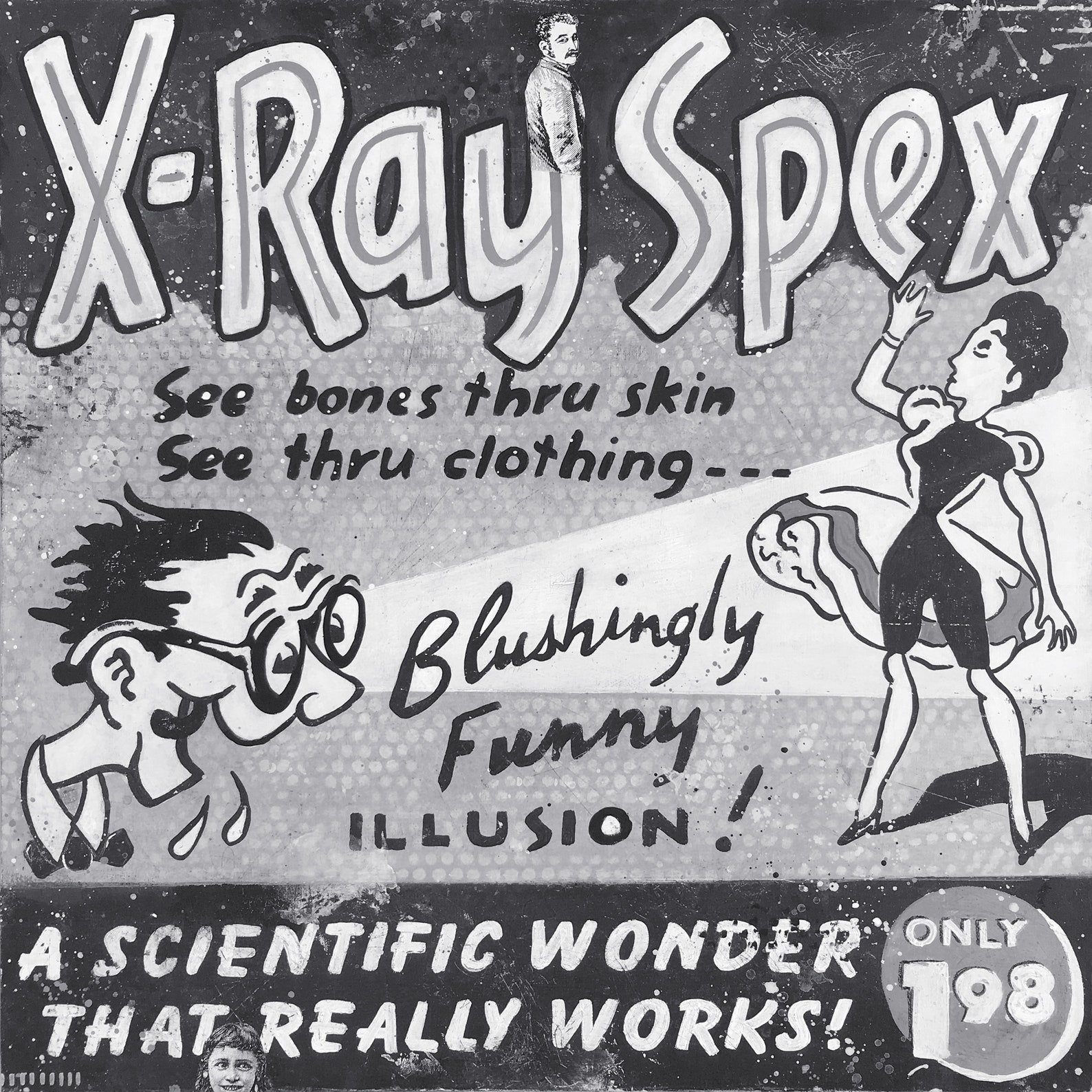

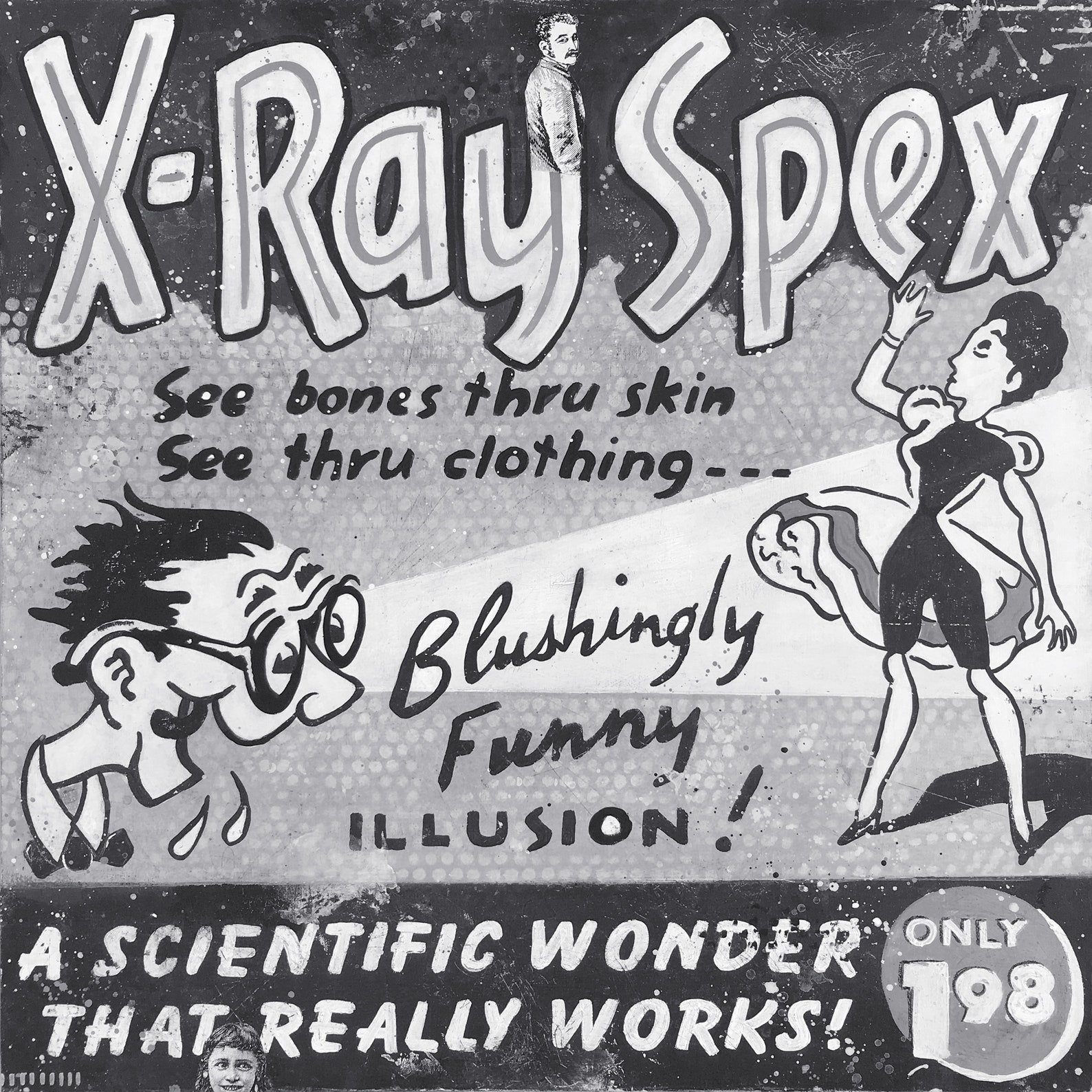

I remember the 50s and 60s comic books. Just about every one of them had an advertisement for “X-ray specs”. I was too poor to shell out the money for them, so I have no idea whether or not they worked.

Yeah, we’re going to hell alright.

Meh. You could do the same thing AI is doing here with Photoshop. Before that, people had to literally cut and paste images, but that was still done sometimes too.

Wow. This is a sad development and a new low for humanity.

FaceApp is cool though.

Nudifying the dressed has been done by the porn industry for decades. AI may take all the manual photoshopping work out of it, but the end product of what AI does is not new.

And I cannot see how AI can do a perfect job. For instance a dressed picture of a woman whose upper extremities appear large may in fact have a bra on that creates an enhanced appearance, where in fact those areas may be small. How does AI know the difference?

There are some actresses in TV shows that have hideous legs with no calves that amount to tight skin pulled over muscle and bone. How does AI sort that out?

There’s nothing perfect in AI regardless of the field of application.

Don’t give Hillary any ideas.

Wonderful.

I have come to the realization that AI is demonic.

The young are under satanic attack more than ever. If abortion and destruction of the family weren’t enough, we now have open homosexual grooming, porn in schools and predatory teachers/professors, transgenderism, child trafficking, widespread prescription drugging, witchcraft and demon-themed toys, movies, video games and TV shows, and now this. And so many parents are clueless or don’t care, happy to let the state raise their children. The young generations are anxious, depressed and demoralized as this world destroys their bodies, lives and souls. Ripe for a heroic global figure to come and rescue them.

I don’t know the status of legal cases in this area, but one of the issues in the recent actors’ strike was control over the rights concerning AI manipulation of actors’ images in future projects. That might be an issue to track closely because actors’ images and personal reputations/personas are, along with their acting ability, their stock in trade. Careers are on the line. For the big-name actors, a lot of money is on the line. For the lesser-known actors, the fear is that your image might be captured in the course of a very minor role, perhaps just as an extra working for a day or two at the minimum day rate as a background character on a film, and from that point on, your “career” is taken over entirely by an AI avatar. Movie producers pushing AI avatars down porn alley is just one of the possibilities. The studios are big targets with deep pockets, and a union is involved. The battle lines are already drawn and the test cases will surely unfold.

Deepfakes and the apps discussed in the linked article are generalizations of these same technologies to the mass market. Any and all of us are potential targets. But the underlying issues are the same. Who has the rights to our images? When and where were photographs taken? For what purposes may they be legitimately used? Is our consent required? Can someone come across a copy of a family photograph of me taken 30 years ago and use it without my consent? If I post a photograph of myself online, I have presumably put it into the public domain, but in that case I have posted a particular photograph, fully clothed and engaged in some innocuous activity. Can such a photograph be altered to present something different? These complications go on and on and on.

In prior lives, I have had occasional involvement in producing newsletters, conference materials and myriad other projects that often involve group photos at various events. The organizations I’ve worked for were absolutely scrupulous about getting express written consent of every person in every photograph before we used it, the exceptions being historical photographs already in the public domain and crowd photos at widely attended public events.

News organizations have to develop their own protocols in this area. I have always been a very low-profile, back-office type of person (I would rather write the speech than deliver the speech), but I live in Washington, DC and I have been at enough events over the years to have been on the front page of major newspapers a few times. Sometimes permission was asked; sometimes not. I don’t have any objections to the photo editors’ calls in those situations. But the circumstances get tricky.

My point is, there is a lot of law already surrounding these subjects. There are some guidelines already in place, and those almost certainly need to be tightened given the advances in technology. Any business, online or not, that manipulates images submitted to it by another party is trackable and could be held liable if express written consent is not given by whoever holds the rights to those images — and any substantive alteration of a photograph (as opposed to sharpening or enhancing a poor quality image while leaving it otherwise unchanged) would presumably require the consent of the person in the photograph. Any business doing unauthorized alterations could and should be sued to oblivion, and be subject to criminal charges as well.

Apps that allow individual users to make such alterations themselves would be harder and maybe impossible to control, but any downstream sharing or sale of altered images is in principle trackable and could/should be actionable. Web-based platforms that transmit or host such images could be held liable if they transmit or display any image lacking legal permission from the person in the photo. Sue them to hell and back.

I have no idea where this will end up, but my sense of where it is trending is that we will end up with a legal regime in which every platform in the online world, including people’s individual websites (even if they are “private”), will be treated as a publisher with the owners responsible for dotting the i’s, crossing the t’s, and obtaining legal consent for every image.

That would end social media as we know it.

Which might be a good thing.

It will probably also end all but the most notional idea of online anonymity. Most of us here already understand that we are not really anonymous online if BigGov or BigTech wants to find us. “Hunter Biden’s laptop.” “Jeffrey Epstein didn’t kill himself.” There ... I’ve just created two more data points on some tracking system somewhere, and I’m just glad that I didn’t bother to walk down to the Capitol Building on January 6 to see what was going on, because my cellphone would have been logged and I might be a suspected insurrectionist. There is no reason in principle why the privacy veil shouldn’t be stripped immediately upon the first complaint if a falsified photo begins to circulate. The law should not be constructed to protect the porn industry, including the amateurs.

This concept is very scary, very scary indeed.

DAYS OF LOT: ‘Nudify’ Apps That Use AI to ‘Undress’ Women in Photos are Soaring in Popularity

Remember the second shortest verse in the Bible.

Whatever can be used for good can be perverted, but damnation is sure for the impenitent.